Add Pools and Expand Capacity

Server pools help you expand the capacity of your existing MinIO cluster quickly and easily. This blog post focuses on increasing the capacity of one cluster, which is different from adding another cluster and replicating the same data across multiple clusters. When adding a server pool to an existing cluster, you increase the overall usable capacity of that cluster. If you have replication set up, then you will need to grow your replication target equally to accommodate the growth of the replication origin.

Server pools are an important concept in MinIO because they facilitate rapid storage capacity expansion. We recommend sizing a single-pool cluster for at least 2-3 years of storage capacity runway, and possibly more if you anticipate dramatic growth. This way you avoid adding unnecessary server pools and instead start with a simple MinIO cluster that stays simple as it grows. Even though server pools are easier to work with than individual nodes, they still add a small amount of management overhead. Once you've expanded, you should think about consolidating multiple pools into a few large ones by decommissioning the smaller pools.

In this post we’ll show you what you need to consider before expanding a cluster, how to create your initial pool, and how to expand the cluster later by adding a new pool.

Build the Cluster

Before you set up a server pool to expand the cluster, make sure the new pool meets the following prerequisites.

Network and Firewall: The nodes in the new pool need to be able to talk to all the existing nodes in the cluster bi-directionally. All the new nodes must listen on the same port as the existing ones. For example, if you use port `9000`, then the new pool must also communicate on `9000`. We also recommend using a load balancer such as Nginx or HAProxy to proxy the requests. Configure the load balancer to route traffic using a least-connections algorithm.
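A quick way to catch firewall problems before launching the new pool is a plain TCP check from each node to every other node. The sketch below is only an illustration and not part of MinIO: the helper names `port_open` and `unreachable` are hypothetical, and it only confirms that something accepts connections on the port, not that it is a healthy MinIO server.

```python
import socket


def port_open(host: str, port: int, timeout: float = 2.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts and DNS failures.
        return False


def unreachable(hosts: list[str], port: int = 9000) -> list[str]:
    """Return the hosts that did not accept a TCP connection on the port."""
    return [host for host in hosts if not port_open(host, port)]
```

Because the traffic has to flow in both directions, you would run `unreachable(...)` from a new node against the existing hostnames and again from an existing node against the new hostnames. An empty list means every host accepted the connection.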

Sequential Hostnames: MinIO uses an expansion notation `{x...y}` to denote a sequential series of hostnames. It is therefore mandatory to name the new nodes in your pool in a sequential manner. If the existing nodes had these hostnames:

minio1.example.com

minio2.example.com

minio3.example.com

minio4.example.com

Then the new pool should have the following hostnames:

minio5.example.com

minio6.example.com

minio7.example.com

minio8.example.com

Be sure to create the DNS records for these hostnames prior to launching the new pool.
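To double-check that a set of hostnames really is sequential, you can collapse it back into the `{x...y}` form. This is a minimal sketch under a few assumptions: `to_expansion` is a hypothetical helper, it expects exactly one number per hostname, and it does not handle zero-padded numbers.

```python
import re


def to_expansion(hostnames: list[str]) -> str:
    """Collapse sequentially numbered hostnames into {x...y} notation.

    Raises ValueError if the names differ in anything other than the
    first number they contain, or if the numbers are not consecutive.
    """
    pattern = re.compile(r"^(\D*)(\d+)(.*)$")
    parts = []
    for name in hostnames:
        match = pattern.match(name)
        if match is None:
            raise ValueError(f"no number found in {name!r}")
        parts.append((match.group(1), int(match.group(2)), match.group(3)))

    prefixes = {prefix for prefix, _, _ in parts}
    suffixes = {suffix for _, _, suffix in parts}
    if len(prefixes) != 1 or len(suffixes) != 1:
        raise ValueError("hostnames differ outside the sequence number")

    numbers = sorted(number for _, number, _ in parts)
    if numbers != list(range(numbers[0], numbers[-1] + 1)):
        raise ValueError(f"numbers are not sequential: {numbers}")

    prefix, suffix = prefixes.pop(), suffixes.pop()
    return f"{prefix}{{{numbers[0]}...{numbers[-1]}}}{suffix}"
```

Passing the four new hostnames above returns `minio{5...8}.example.com`, while a list with a gap or a duplicate raises a `ValueError`.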

Sequential Drives: Similar to the hostnames, the drives need to be mounted in sequential order as well, using the same expansion notation `{x...y}`. Here is an example of an `/etc/fstab` file.

You can then specify the entire range of drives with `/mnt/disk{1...4}`. If you want to use a specific sub-folder on each drive, specify it as `/mnt/disk{1...4}/minio`.
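To see what a range actually covers, it can help to expand the notation by hand. The sketch below is our own illustration of the `{x...y}` syntax, not MinIO's parser: `expand` is a hypothetical helper that only understands plain integer ranges, and the combined host-plus-drive URL in the usage note is an assumed example.

```python
import itertools
import re

RANGE = re.compile(r"\{(\d+)\.\.\.(\d+)\}")


def expand(template: str) -> list[str]:
    """Expand every {x...y} range in template into concrete strings."""
    # With two capture groups, split() returns
    # [text, low, high, text, low, high, ..., text].
    pieces = RANGE.split(template)
    literals = pieces[0::3]
    ranges = [
        range(int(low), int(high) + 1)
        for low, high in zip(pieces[1::3], pieces[2::3])
    ]

    results = []
    for combo in itertools.product(*ranges):
        out = literals[0]
        for number, literal in zip(combo, literals[1:]):
            out += f"{number}{literal}"
        results.append(out)
    return results
```

`expand("/mnt/disk{1...4}")` yields the four mount points `/mnt/disk1` through `/mnt/disk4`. A template with two ranges, such as `https://minio{5...8}.example.com/mnt/disk{1...4}/minio`, yields 16 entries, one per host and drive combination.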

Erasure Code: MinIO requires each server pool to satisfy the deployment parameters of the existing cluster. Specifically, the new pool topology must support a minimum of 2 x EC:N drives per erasure set, where EC:N is the standard parity storage class of the deployment. This requirement ensures the new server pool can satisfy the expected SLA of the deployment. For reference, this blog post explains how to use the Erasure Code Calculator to determine the number of disks and capacity you need. For an explanation of erasure coding, please see Erasure Coding 101.
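The 2 x EC:N rule is simple arithmetic, so it is easy to sanity-check a planned pool. The sketch below assumes you already know how many drives end up in each erasure set; how MinIO divides a pool into erasure sets is not covered here, and `meets_parity_minimum` is a hypothetical helper name.

```python
def meets_parity_minimum(drives_per_erasure_set: int, parity: int) -> bool:
    """Check the 2 x EC:N rule for a single erasure set.

    parity is the N in EC:N, the standard parity storage class.
    """
    if drives_per_erasure_set <= 0 or parity < 0:
        raise ValueError("drive count must be positive and parity non-negative")
    return drives_per_erasure_set >= 2 * parity
```

For example, with EC:4 an erasure set needs at least 8 drives, so `meets_parity_minimum(16, 4)` is `True` and `meets_parity_minimum(4, 4)` is `False`.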

Atomic Updates: Ensure that the new pool is as homogeneous with the existing cluster as possible. It does not have to match spec for spec, but the drives and network configuration should be as close as possible to avoid potential edge-case issues. Adding a new server pool requires restarting all MinIO nodes in the deployment at the same time. Do not perform rolling restarts (e.g. one node at a time). Because MinIO operations are atomic and strictly consistent, the simultaneous restart is non-disruptive to applications and ongoing operations.

Let's go ahead and build the cluster. In this example, we’ll build a Kubernetes cluster using KIND. We’ll use the following configuration to build a virtual 8-node cluster.
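As a rough sketch of such a configuration, the snippet below renders a KIND cluster config. We are assuming one control-plane node plus eight workers here; the exact node layout and the `kind_config` helper name are illustrative.

```python
def kind_config(workers: int = 8) -> str:
    """Render a KIND cluster config with one control-plane node and N workers."""
    lines = [
        "kind: Cluster",
        "apiVersion: kind.x-k8s.io/v1alpha4",
        "nodes:",
        "- role: control-plane",
    ]
    # One list entry per worker node.
    lines += ["- role: worker"] * workers
    return "\n".join(lines) + "\n"
```

Write the returned string to a file (for example a hypothetical `kind-config.yaml`) and pass it to `kind create cluster --config kind-config.yaml`.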

Label the first 4 nodes to assign them to pool zero.
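One way to script the labeling is to build the `kubectl label` invocation from a list of node names. Everything specific in this sketch is an assumption: the node names follow KIND's default naming for a cluster called `kind`, and the `pool=zero` label key and value are placeholders you should match to whatever your tenant's node selector expects.

```python
def label_command(nodes: list[str], key: str = "pool", value: str = "zero") -> list[str]:
    """Build the argv for `kubectl label nodes <node...> key=value`."""
    if not nodes:
        raise ValueError("at least one node name is required")
    return ["kubectl", "label", "nodes", *nodes, f"{key}={value}"]


# Assumed default KIND worker names; check `kubectl get nodes` for yours.
FIRST_FOUR = ["kind-worker", "kind-worker2", "kind-worker3", "kind-worker4"]
```

You can run the result with `subprocess.run(label_command(FIRST_FOUR), check=True)`, and later reuse the same helper with a different value for the nodes that will host the second pool.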

Clone the MinIO operator’s tenant lite Kustomize configuration

Ensure `tenant.yaml` defines a pool called `pool-0`.
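For orientation, here is a sketch of the shape a pool entry takes in the Tenant spec, expressed as a Python dictionary. The field names follow the MinIO Operator Tenant resource, but the values (two volumes per server, 2Gi volumes, a `pool: zero` node selector) are assumptions for this demo, and `pool_spec` is a hypothetical helper. Compare it against the `tenant.yaml` you cloned instead of treating it as the authoritative file.

```python
import json


def pool_spec(name: str, label_value: str, servers: int = 4,
              volumes_per_server: int = 2, size: str = "2Gi") -> dict:
    """Return one entry for the Tenant's spec.pools list."""
    return {
        "name": name,
        "servers": servers,
        "volumesPerServer": volumes_per_server,
        # Pin the pool's pods to the nodes carrying the matching label.
        "nodeSelector": {"pool": label_value},
        "volumeClaimTemplate": {
            "metadata": {"name": "data"},
            "spec": {
                "accessModes": ["ReadWriteOnce"],
                "resources": {"requests": {"storage": size}},
            },
        },
    }


pool_0 = pool_spec("pool-0", "zero")
# JSON is valid YAML, so this output can be compared with tenant.yaml.
rendered = json.dumps(pool_0, indent=2)
```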

Apply tenant configuration to launch pool-0

Check to make sure there are 4 pods in the pool

This is the initial setup that most folks start out with, and it positions you to expand seamlessly in the future. Speaking of expanding, let's take a look at what that looks like.

Expand the Cluster

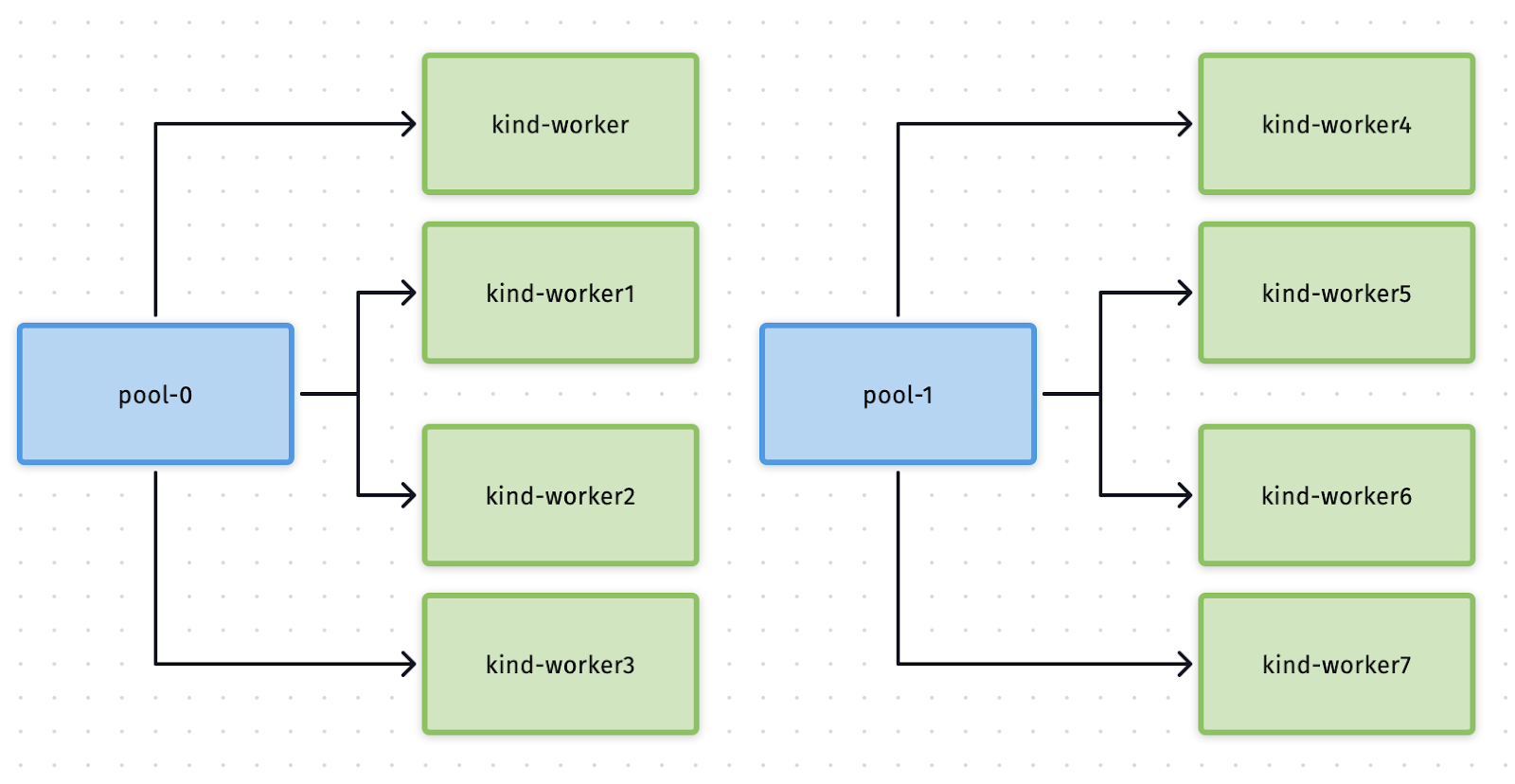

Expanding a pool is a non-disruptive operation which causes zero cluster downtime. Below is a diagram of what we intend to achieve as an end result.

On the left-hand side of the diagram above is pool-0, which we set up in the previous steps. In this section we’ll add pool-1 to expand the overall capacity of the cluster. To do so, you need 4 more nodes similar to the ones in pool-0. We’ve already provisioned them by launching an 8-node cluster to simplify the demo.

Edit the tenant-lite config to add pool-1

This should open a YAML file. Find the `pools` section and add a `pool-1` entry below the existing `pool-0` entry.
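Conceptually, the edit appends one more entry to the `pools` list and leaves the existing entry alone. The sketch below models that on a plain dictionary; `add_pool` is a hypothetical helper, and the trimmed-down tenant with its `pool: zero` and `pool: one` node selectors is an assumed example, not the full Tenant resource.

```python
import copy


def add_pool(tenant: dict, new_pool: dict) -> dict:
    """Return a copy of tenant with new_pool appended to spec.pools."""
    existing = tenant.get("spec", {}).get("pools", [])
    if any(pool.get("name") == new_pool["name"] for pool in existing):
        raise ValueError(f"pool {new_pool['name']!r} already exists")
    updated = copy.deepcopy(tenant)
    updated.setdefault("spec", {}).setdefault("pools", []).append(
        copy.deepcopy(new_pool)
    )
    return updated


# Assumed, trimmed-down tenant with a single pool.
tenant = {"spec": {"pools": [
    {"name": "pool-0", "servers": 4, "nodeSelector": {"pool": "zero"}},
]}}

# The new pool mirrors pool-0 except for its name and node selector.
pool_1 = {"name": "pool-1", "servers": 4, "nodeSelector": {"pool": "one"}}
expanded = add_pool(tenant, pool_1)
```

The original `tenant` dictionary is left untouched, and adding a pool whose name is already taken raises a `ValueError`.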

As soon as you save the file, the new pool should start deploying. Verify this by listing the pods.
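If you would like to check the result programmatically, you can count pods per pool from their names. This sketch assumes pods are named `<tenant>-<pool>-<ordinal>` with pool names of the form `pool-N`. The tenant name `myminio` in the usage note is a placeholder, and `pods_per_pool` is a hypothetical helper.

```python
import re
from collections import Counter

# Assumed pod naming: <tenant>-<pool>-<ordinal>, e.g. myminio-pool-0-3.
POD_NAME = re.compile(r"^(?P<tenant>.+)-(?P<pool>pool-\d+)-(?P<ordinal>\d+)$")


def pods_per_pool(pod_names: list[str]) -> dict[str, int]:
    """Count pods per pool, ignoring names that do not match the pattern."""
    counts = Counter()
    for name in pod_names:
        match = POD_NAME.match(name)
        if match:
            counts[match.group("pool")] += 1
    return dict(counts)
```

Feeding it the pod names from `kubectl get pods` in the tenant's namespace should give four pods each for `pool-0` and `pool-1` once the expansion has finished, for example `myminio-pool-0-0` through `myminio-pool-1-3`.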

There you have it. Wasn’t that a remarkably easy way to expand?

Ermahgerd Pools!

Server pools streamline ongoing operations of MinIO clusters. Pools allow you to expand your cluster at a moment's notice without having to move your data to different clusters or rebalance the cluster. Server pools enhance operational efficiency because they give storage admins a powerful shortcut: the ability to address an entire hardware cluster as a single resource.

While server pools are an excellent way to expand the capacity of your cluster, they should be used judiciously. We recommend that you size the cluster from Day One to have enough space for 3 years of anticipated growth so you don’t need to immediately start adding more pools. In addition, consider tiering before buying more capacity – tier off the old data to less expensive hardware and devote the latest and greatest hardware to storing the most recent and heavily accessed objects. If and when you have to expand the cluster by adding pools, have a game plan to eventually decommission the older pools and consolidate into a single large cluster per site. This will further lower the overhead required to keep your MinIO cluster running smoothly.

If you have any questions on how to add and expand server pools, be sure to reach out to us on Slack!