The MinIO DataPod: A Reference Architecture for Exascale

The modern enterprise defines itself by its data. This requires a data infrastructure for AI/ML as well as a data infrastructure that serves as the foundation for a Modern Datalake capable of supporting business intelligence, data analytics, and data science. This holds whether an enterprise is behind, just getting started, or already using AI for advanced insights. For the foreseeable future, this is how enterprises will be perceived. There are multiple stages to the larger problem of how AI goes to market in the enterprise: data ingestion, transformation, training, inferencing, production, and archiving, with data shared across each stage. As these workloads scale, the complexity of the underlying AI data infrastructure increases. This creates the need for high-performance infrastructure that also minimizes total cost of ownership (TCO).

MinIO has created a comprehensive blueprint for data infrastructure to support exascale AI and other large-scale data lake workloads. It is called the MinIO DataPod. The unit of measurement it uses is 100 PiB. Why? Because data at this scale is common in the enterprise today. Here are some quick examples:

- A North American automobile manufacturer with nearly an exabyte of car video

- A German automobile manufacturer with more than 50 PB of car telemetry

- A biotech firm with more than 50 PB of biological, chemical, & patient-centric data

- A cybersecurity company with more than 500 PB of log files

- A media streaming company with more than 200 PB of video

- A defense contractor with more than 80 PB of geospatial, log and telemetry data from aircraft

Even if an enterprise is not at 100 PB today, it will be within a few quarters. The average firm's data is growing at 42% a year; data-centric firms are growing at twice that rate, if not more.
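As a rough check on what these growth rates imply, the time to reach 100 PB under compound annual growth can be estimated as below. This is a minimal sketch: the starting sizes are hypothetical, and only the 42% rate and the "twice that" rate (84%) come from the text. How soon an enterprise crosses 100 PB depends strongly on where it starts.

```python
import math


def years_to_reach(start_pb: float, target_pb: float, annual_growth: float) -> float:
    """Years of compound growth needed to grow from start_pb to target_pb."""
    if start_pb >= target_pb:
        return 0.0
    return math.log(target_pb / start_pb) / math.log(1.0 + annual_growth)


# Hypothetical starting points; growth rates taken from the text.
for start_pb in (50, 80):
    for rate in (0.42, 0.84):
        quarters = years_to_reach(start_pb, 100, rate) * 4
        print(f"{start_pb} PB at {rate:.0%}/yr: ~{quarters:.1f} quarters to reach 100 PB")
```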

The MinIO DataPod reference architecture can be stacked in different ways to achieve almost any scale. Indeed, we have customers that have built on this blueprint all the way past an exabyte, and with multiple hardware vendors. The MinIO DataPod offers an end-to-end architecture that enables infrastructure administrators to deploy cost-efficient solutions for a variety of AI and ML workloads. Here is the rationale for our architecture.

AI Requires Disaggregated Storage and Compute

AI workloads, especially generative AI, inherently require GPUs for compute. They are spectacular devices with incredible throughput, memory bandwidth, and parallel processing capabilities. Keeping up with GPUs that are getting faster and faster requires high-speed storage. This is especially true when training data cannot fit into memory and training loops have to make more calls to storage. Furthermore, enterprises require more than performance: they also need security, replication, and resiliency.

These enterprise storage requirements demand that the architecture fully disaggregate storage from compute. This allows storage to scale independently of compute. Given that storage growth is generally one or more orders of magnitude greater than compute growth, this approach ensures the best economics through superior capacity utilization.
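The utilization argument can be made concrete with a small sizing sketch. All numbers here are hypothetical (GPUs per node, capacity per node, and the demand figures are assumptions for illustration, not DataPod specifications). The sketch compares buying converged nodes, where every node adds both GPUs and capacity, against sizing compute and storage separately.

```python
import math


def coupled_plan(gpus_needed, storage_tb_needed, gpus_per_node, tb_per_node):
    """Converged nodes bundle GPUs and capacity, so the larger demand sets the node count."""
    nodes = max(math.ceil(gpus_needed / gpus_per_node),
                math.ceil(storage_tb_needed / tb_per_node))
    return {
        "nodes": nodes,
        "gpu_utilization": gpus_needed / (nodes * gpus_per_node),
        "storage_utilization": storage_tb_needed / (nodes * tb_per_node),
    }


def disaggregated_plan(gpus_needed, storage_tb_needed, gpus_per_node, tb_per_node):
    """Compute and storage tiers are sized independently of each other."""
    compute_nodes = math.ceil(gpus_needed / gpus_per_node)
    storage_nodes = math.ceil(storage_tb_needed / tb_per_node)
    return {
        "compute_nodes": compute_nodes,
        "storage_nodes": storage_nodes,
        "gpu_utilization": gpus_needed / (compute_nodes * gpus_per_node),
        "storage_utilization": storage_tb_needed / (storage_nodes * tb_per_node),
    }


# Hypothetical demand: storage has grown far faster than compute.
demand = dict(gpus_needed=96, storage_tb_needed=40_000, gpus_per_node=8, tb_per_node=500)
print(coupled_plan(**demand))        # storage demand forces the purchase of idle GPUs
print(disaggregated_plan(**demand))  # each tier is bought only for its own demand
```

Under these assumed numbers, the converged plan must buy 80 nodes to hold the data, leaving most of the purchased GPUs idle, while the disaggregated plan buys GPUs only for the compute demand.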

AI Workloads Demand a Different Class of Networking

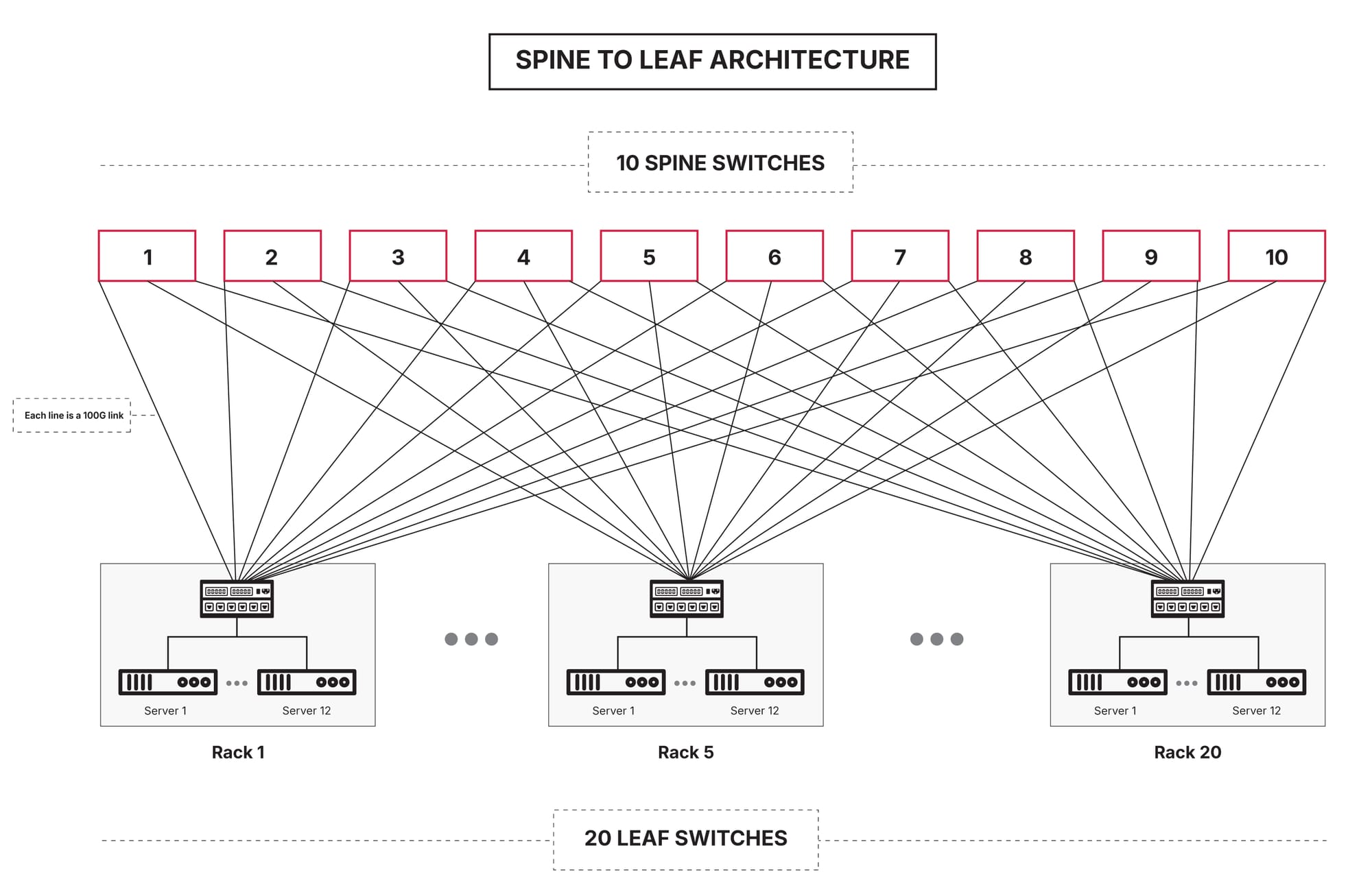

Networking infrastructure has standardized on 100 Gigabits per second (Gbps) bandwidth links for AI workload deployments. Modern NVMe drives provide 7 GBps of throughput on average, which is 56 Gbps per drive, so as few as two drives can exceed a 100 Gbps link. This makes the network bandwidth between the storage servers and the GPU compute servers the bottleneck for AI pipeline execution performance.
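The bottleneck follows from simple unit conversion. The sketch below assumes the 7 GBps per-drive figure and a single 100 Gbps link per storage server, and ignores protocol overhead; the number of drives per server is a hypothetical parameter, not a DataPod specification.

```python
BITS_PER_BYTE = 8


def drive_gbps(drive_gbytes_per_s: float) -> float:
    """Convert drive throughput from gigabytes/s to gigabits/s."""
    return drive_gbytes_per_s * BITS_PER_BYTE


def drives_to_saturate(link_gbps: float, drive_gbytes_per_s: float) -> float:
    """Number of drives whose combined throughput equals the link bandwidth."""
    return link_gbps / drive_gbps(drive_gbytes_per_s)


def deliverable_fraction(link_gbps: float, drives: int, drive_gbytes_per_s: float) -> float:
    """Fraction of aggregate drive throughput that the link can actually carry."""
    aggregate_gbps = drives * drive_gbps(drive_gbytes_per_s)
    return min(1.0, link_gbps / aggregate_gbps)


print(drive_gbps(7.0))                    # 56.0 Gbps per drive
print(drives_to_saturate(100, 7.0))       # fewer than two drives fill a 100 Gbps link
print(deliverable_fraction(100, 8, 7.0))  # hypothetical 8-drive server
```

With more than one or two drives per server, the link, not the media, sets the ceiling, which is why faster Ethernet links matter for this architecture.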

Solving this problem with complex networking solutions like InfiniBand (IB) has real limitations. We recommend that enterprises leverage existing, industry-standard Ethernet-based solutions (e.g., HTTP over TCP) that work out of the box to deliver data to GPUs at high throughput, for the following reasons:

- Much larger and open ecosystem

- Reduced network infrastructure cost

- High interconnect speeds (800 GbE and beyond) with RDMA over Ethernet support (i.e., RoCEv2)

- Reuse of existing expertise and tools for deploying, managing, and observing Ethernet

- Innovation in GPU-to-storage-server communication is happening on Ethernet-based solutions

The Requirements of AI Demand Object Storage

It is not a coincidence that AI data infrastructure in public clouds is built on top of object stores. Nor is it a coincidence that every major foundational model was trained on an object store. This is a function of the fact that POSIX is too chatty to work at the data scale required by AI, despite what the chorus of legacy filers will claim.

The same architecture that delivers AI in the public cloud should be applied to the private cloud and obviously the hybrid cloud. Object stores excel at handling various data formats and large volumes of unstructured data and can effortlessly scale to accommodate growing data without compromising performance. Their flat namespace and metadata capabilities enable efficient data management and processing that is crucial for AI tasks requiring fast access to large datasets.
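To illustrate what a flat namespace with per-object metadata means in practice, here is a toy in-memory sketch. It is not MinIO's implementation or API; the class `ToyObjectStore` and its method names are hypothetical and exist only for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class ToyObjectStore:
    """Toy flat-namespace store: a single map from full key to (data, metadata)."""
    objects: dict = field(default_factory=dict)

    def put(self, key: str, data: bytes, **metadata: str) -> None:
        # A '/' in a key is just a character; no directory is created or traversed.
        self.objects[key] = (data, dict(metadata))

    def get(self, key: str) -> bytes:
        # One lookup by full key, with no path resolution step.
        return self.objects[key][0]

    def list_keys(self, prefix: str = "") -> list:
        # Listing is a prefix filter over keys rather than a directory walk.
        return sorted(k for k in self.objects if k.startswith(prefix))

    def find(self, **metadata: str) -> list:
        # Select objects by metadata without reading their contents.
        return sorted(
            k for k, (_, meta) in self.objects.items()
            if all(meta.get(name) == value for name, value in metadata.items())
        )


store = ToyObjectStore()
store.put("train/shard-0001.parquet", b"a", split="train")
store.put("train/shard-0002.parquet", b"b", split="train")
store.put("eval/shard-0001.parquet", b"c", split="eval")
print(store.list_keys("train/"))
print(store.find(split="eval"))
```

Because every object is addressed by its full key, a reader needs one request per object rather than a sequence of directory operations, which is the property the text contrasts with POSIX.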

As high-speed GPUs evolve and network bandwidth standardizes at 200/400/800 Gbps and beyond, modern object stores will be the only solution that meets the performance SLAs and scale of AI workloads.

Software Defined Everything

We know that GPUs are the star of the show and that they are hardware. But even NVIDIA will tell you the secret sauce is CUDA. Move outside the chip, however, and the infrastructure world is increasingly software-defined. Nowhere is this more true than in storage. Software-defined storage solutions are essential for scalability, flexibility, and cloud integration, surpassing traditional appliance-based models for the following reasons:

- Cloud Compatibility: Software-defined storage aligns with cloud operations, unlike appliances that cannot run across multiple clouds.

- Containerization: Appliances cannot be containerized, so they lose cloud-native advantages and cannot be orchestrated by Kubernetes.

- Hardware Flexibility: Software-defined storage supports a wide range of hardware, from edge to core, accommodating diverse IT environments.

- Adaptive Performance: Software-defined storage offers unmatched flexibility, efficiently managing different capacities and performance needs across various chipsets.

At exabyte scale, simplicity and a cloud-based operating model are crucial. Object storage, as a software-defined solution, should work seamlessly on commodity off-the-shelf (COTS) hardware and any compute platform, be it bare metal, virtual machines, or containers.

Custom-built hardware appliances for object storage often compensate for poorly designed software with costly hardware and complex solutions, resulting in a high total cost of ownership (TCO).

MinIO DataPOD Hardware Specification for AI

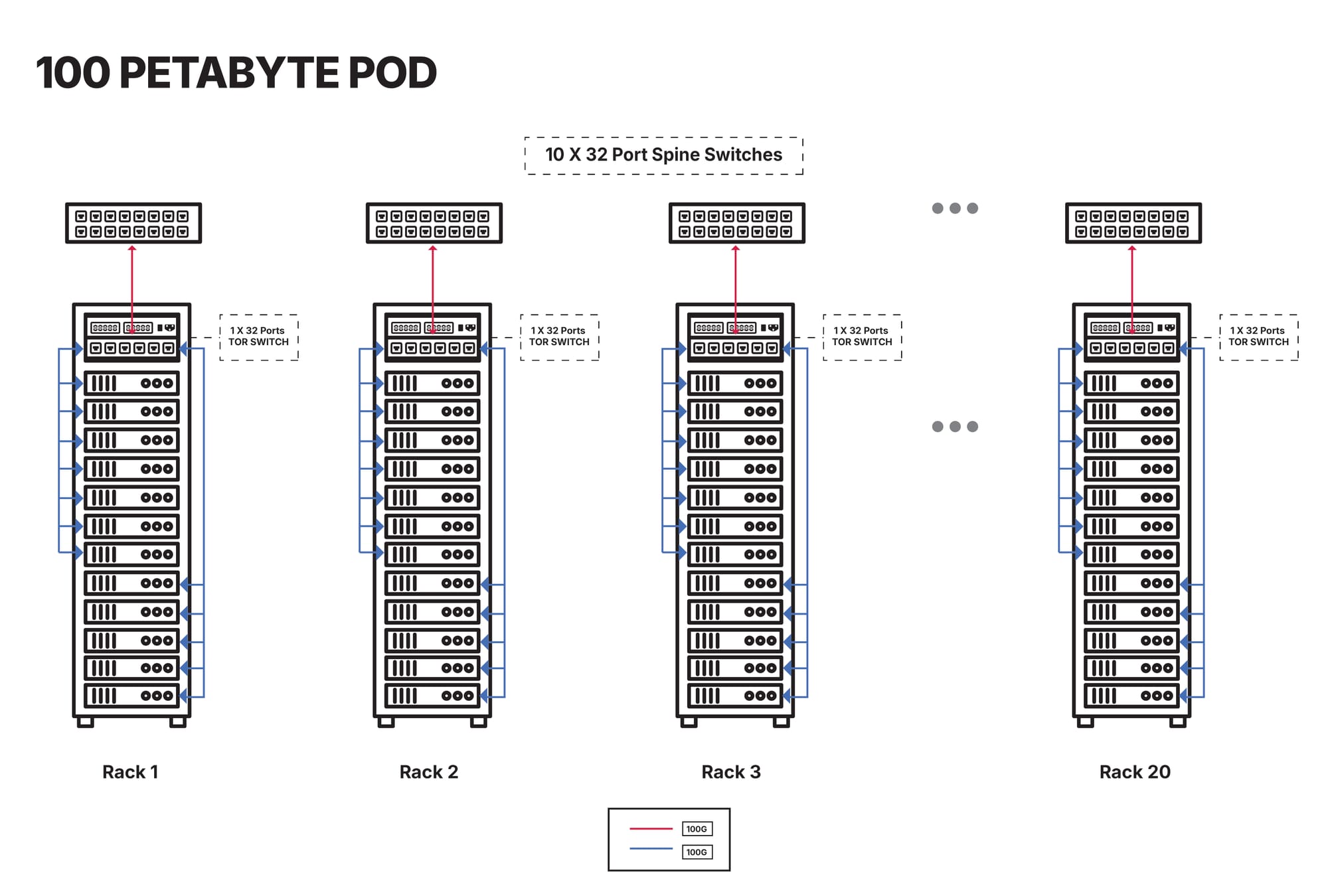

Enterprise customers using MinIO for AI initiatives build exabyte-scale data infrastructure as repeatable units of 100 PiB. This eases deployment, maintenance, and scaling for infrastructure administrators as AI data grows exponentially over time. Below is the bill of materials (BOM) for building a 100 PiB scale data infrastructure.
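Because the unit is stated in PiB (binary) while the customer examples above are in PB (decimal), a short conversion helps when planning how many units a target capacity needs. This is a minimal sketch that treats 100 PiB as the planning capacity of one unit and does not account for erasure-coding overhead or any raw-versus-usable distinction, which the text does not specify.

```python
PIB = 2**50   # bytes in one pebibyte
PB = 10**15   # bytes in one petabyte
UNIT_BYTES = 100 * PIB  # one 100 PiB unit, as described in the text


def unit_capacity_pb() -> float:
    """Capacity of one 100 PiB unit expressed in decimal petabytes."""
    return UNIT_BYTES / PB


def units_needed(target_bytes: int) -> int:
    """Smallest whole number of 100 PiB units that covers target_bytes."""
    return -(-target_bytes // UNIT_BYTES)  # integer ceiling division


print(round(unit_capacity_pb(), 1))  # about 112.6 PB per unit
print(units_needed(10**18))          # units for one exabyte (EB)
print(units_needed(2**60))           # units for one exbibyte (EiB)
```

Under these assumptions, one exabyte needs 9 units and one exbibyte needs 11.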

Cluster Specification

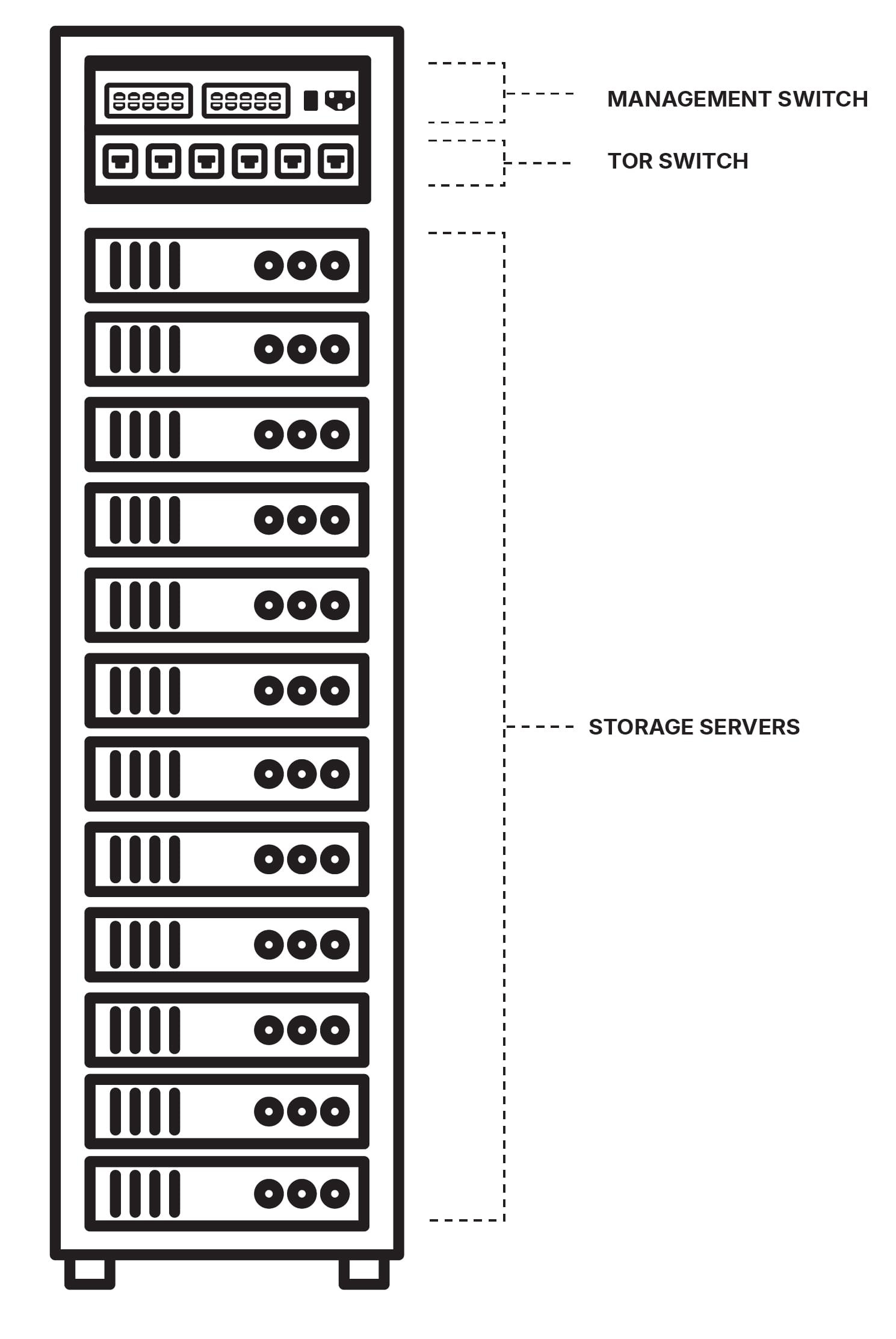

Single Rack Specification

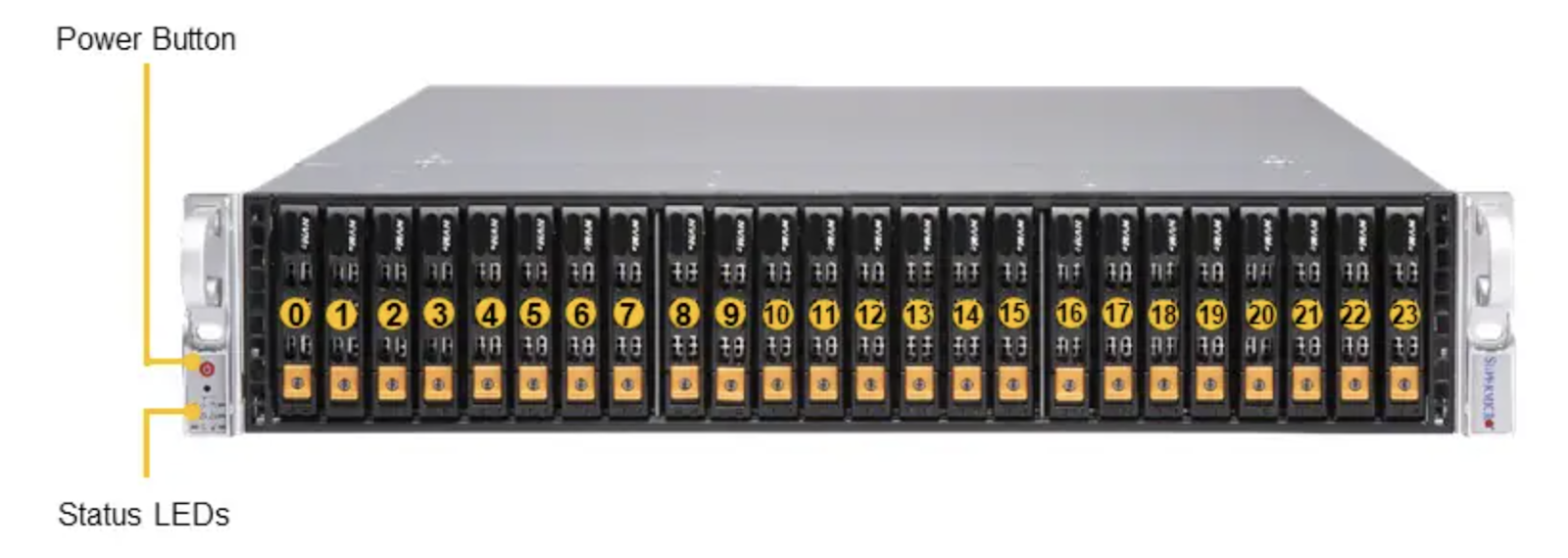

Storage Server Specification

Storage Server Reference

Network Switch Specification

Price

MinIO has validated this architecture with multiple customers and would expect others to see the following average price per terabyte per month. This is an average street price and the actual price may vary depending on the configuration and the hardware vendor relationship.

Vendor-specific turnkey hardware appliances for AI result in high TCO and do not scale from a unit-economics standpoint for large-data AI initiatives at exabyte scale.

Conclusion

Setting up data infrastructure at exabyte scale while meeting TCO objectives for all AI/ML workloads can be complex and hard to get right. MinIO’s DataPOD infrastructure blueprint makes it simple and straightforward for infrastructure administrators to set up the required commodity off-the-shelf hardware with the highly scalable, performant, cost-effective, S3-compatible AIStor. The result is improved time to market and faster time to value from AI initiatives across the enterprise.